Deep sea coral reefs more accessible with touch-sensitive underwater robotic platform

Prof. Voolstra in front of a Remotely Operated Vehicle (ROV) aboard the research vessel Aegaeo pointing to the green collection basket that is attached to a robotic arm to sample deep-sea corals.

Current underwater exploration and monitoring of oceanic resources are both expensive and challenging. They require human divers, who can explore underwater environments only for short periods and can safely descend only to certain depths. Underwater vehicles have proven useful for exploring oceans at greater depths, but they lack human dexterity, which is necessary for fine manipulation tasks such as collecting samples and in situ experimentation. These vehicles are also large and cumbersome, and their mechanical characteristics make them difficult to operate in confined, fragile spaces or in turbulent water.

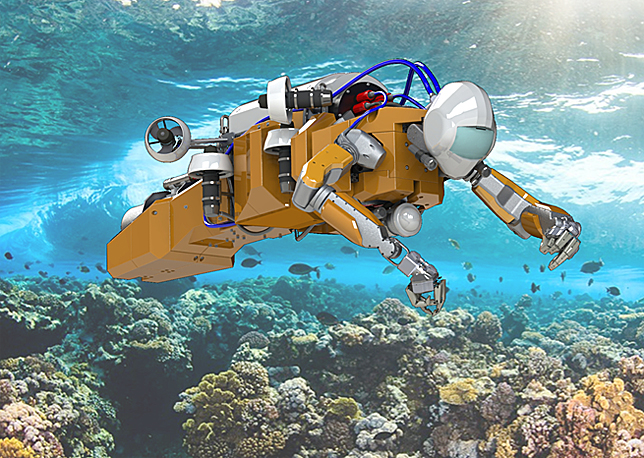

KAUST and Stanford University together with Meka Robotics have been collaborating to design and build a radical new underwater robotic platform to serve as a robotic avatar diver.

To solve this issue, KAUST and Stanford University together with Meka Robotics have been collaborating for the past three years on an ambitious project. The goal of the project, called Red Sea Robotics Exploratorium, is to design and build a radical new underwater robotic platform to serve as a robotic avatar diver.

The research project is a collaboration between KAUST's Computer, Electrical and Mathematical Sciences and Engineering (CEMSE) division, the Red Sea Research Center in KAUST's Biological and Environmental Science and Engineering (BESE) division, and the Artificial Intelligence Lab at Stanford University. The KAUST team includes Professors Khaled Salama, Xabier Irigoyen, and Christian R. Voolstra. Stanford's team is led by Professors Oussama Khatib and Mark R. Cutkosky and works under an AEA3 Collaborative Research Grant.

Christian Voolstra, Assistant Professor of Marine Science at the Red Sea Research Center, recently sat down with us to provide additional insight into the inner workings of and science behind the venture.

What makes the deep sea corals unique?

In the Red Sea, the water is never below about 20 degrees Celsius, which makes the deep-sea corals within it special. Other oceans tend to be colder at depth, and their deep waters are in turn rich in nutrients and oxygen. In the Red Sea, deep waters are oxygen-deprived, nutrient-depleted, warm, and highly saline, making it challenging for deep-sea organisms to survive. On two successive research cruises in the Red Sea, we found deep-sea corals that were really not expected to be there. This was a world first and was published in Nature's Scientific Reports in 2013, but we also came to realize that our ability to operate in underwater (deep-sea) environments is extremely limited.

What motivated you to build a new underwater robotic platform?

Currently people use a so-called ROV (remotely operated vehicle), which is a little submarine with two robotic arms and very limited dexterity. Using the ROV to examine delicate coral colonies proved to be troublesome. Prof. Khaled Salama (who is a professor in KAUST's electrical engineering division) knew people at Stanford University who might be able to help us create a new robotic interface system. We got in touch with the Stanford team and told them that we were not happy with the engineering solutions that are currently available. They said, "Ok, we know how to build robots … why not build an underwater robot?"

This is how the Red Sea Robotics Exploratorium came to be.

What goes into creating a robot of this kind?

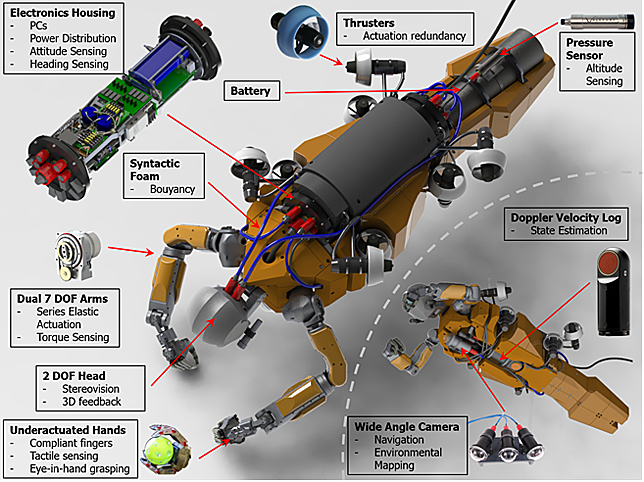

A lot goes into the software development side of artificial intelligence and knowing how the robot will move and interact. The Stanford team are pioneers in the field of human-assisted robotics. This means that the robot is capable of moving autonomously in a given environment, keeping its balance, and counteracting underwater currents, so the researcher can focus on the task at hand. The control interface comes from the field of microsurgery - it's like a glove you put on. Your hands and movements become the little pinching device, and whatever you do, the robot instantly mimics. On top of that you have haptic technology, which gives you the sense of touch. Another advantage of this intuitive control is that it is very easy for a researcher to learn. Current ROVs require two trained engineers to operate the device. In contrast, with our underwater robot, the connection between what you're doing and the robot is direct and instantaneous.

How far along are you into the creation of the prototype?

Currently the parts are being assembled by the Artificial Intelligence Lab at Stanford University. The robot arms come from Meka Robotics in San Francisco. We really got lucky, as they were acquired by Google recently, and this was the last job they did outside of Google.

When building a device like this, waterproofing becomes a problem because it limits the depth you can go to. This is the first robot where we built all the parts oil-immersed. Oil-immersed means you can in principle go to any depth, as the liquid is almost incompressible. This engineering solution makes the robot waterproof to a depth of two thousand meters.

One of the great things about this project is that it's been a fantastic opportunity for different departments within the University to come together. One department works on the hand, the other on the head, and another works on the electronics, or the underwater implementation.

Because the robot is built in a virtual environment, you can test and work with the robot before it has been assembled. We also built an underwater world for the robot to explore and interact with. In the real world, we have attached the arms and are now in the process of putting it all together. I think this summer is a realistic date for having the robot in the water.

How impressed are you by the new technology you are helping to create?

The design is very impressive because we managed to fit all the hardware and electronics into a robot approximately the size of a human diver. This comes with the added benefit that the robot can use the same tools we as divers use. The first version will probably be tethered to the boat. At a later time we plan to implement an underwater base station that the robot can go back to in order to charge its batteries.

What percentage of coral reefs remains unexplored due to current technological limitations?

We always have this confirmation bias when it comes to observing coral reefs. We think all coral reefs work a certain way. But the reality is that we only look at about 25 percent of the coral reef biomass (roughly the first 30 m of the water column), and there is so much more coral below that depth. This is especially true for the Red Sea, where the water is very clear, so light can penetrate to greater depths. With this technology you can really rethink how you approach underwater research.

Do you see any other professions that can benefit from this type of technology?

The team envisions that in the near future, we will be able to use different robots with the same tele-operation framework for different tasks such as undersea pipeline inspection and repair, mine defusal, aircraft maintenance, the exploration of disaster areas, and rescue operations. This robot provides access to so many novel engineering solutions, which allow you to do things that were not possible before. Now we are truly able to touch and feel the bottom of the ocean. I really think this form of robotics is one of the next frontiers in science.

Do you feel this venture would be possible at any other university than KAUST?

KAUST is unique. You can dream of what initially appears to be a blue-sky project. Then a year later you find yourself in a room with people discussing how to move the project forward. Yes, I think it would be hard to pitch it elsewhere. It's not about taking something that already exists and making it better. It's about creating something entirely new. This requires scientific freedom as well as room and resources for discovery. You are not held back by the boundaries of your scientific discipline. One of the good things about projects like this is that you have people from different fields coming together to help solve a problem. I think it's a fascinating project that highlights what KAUST stands for.

- by David Murphy, KAUST News