Computer vision: Teaching computers how to see the world

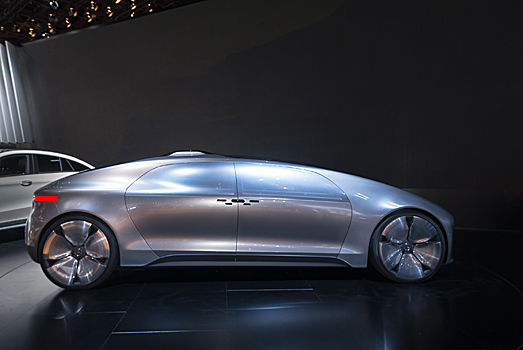

The Mercedes-Benz F 015 self-driving concept car.

"Our eyes are the equivalent of two cameras," says KAUST Electrical Engineering Assistant Professor, Bernard Ghanem. Much like cameras, human beings essentially record video. We then process these images we intake to make decisions in our day-to-day lives. We know, for example, to stay away from a barking dog, not to touch a hot stove or not to walk onto unsafe surfaces. What if we could teach machines how to do the same thing? In fact, that's what computer vision and machine learning are all about – inputting visual stimuli, classifying the information and making a decision.

Ghanem works in the Visual Computing Center (VCC) and is interested in computer vision, image processing and machine learning. "People in computer vision are trying to teach machines how to see the world the same way we see the world. Seeing not only object color and so forth, but actually understanding semantically what this visual data is all about," he said.

Self-driving cars

One industry in which computer vision is playing an important role today is the automotive sector, with the advent of self-driving cars. Several auto manufacturers have already developed self-driving concept vehicles and envision commercially viable models in the coming years. Google's self-driving cars are already set to take to the streets of California.

"The self-driving cars like Google have visual sensors," said Ghanem. Is the light red, green, or yellow? Because based on that there are traffic rules to follow. "But there are other sensors like range finders and other types of sensors that perceive how far certain objects are. The sensors can also determine exactly what those objects or obstacles are." When faced with a potential collision scenario, the autonomous driving technology in the vehicle must be able to differentiate between a pedestrian, a tree or another car when making a decision to veer the car in whichever direction.

Mobileye, another company specializing in computer vision, will start introducing autonomous driving technology in certain brands of cars as early as next year, both for obstacle avoidance and for detecting when a driver has started drifting from lane to lane.

Other industry applications

Computer scientists also use computer vision to assist governments, agencies and corporations with applications such as surveillance, security and even marketing. For instance, some airports use biometric technology to scan travelers' faces for identification purposes.

"What I do specifically is analyze video," said Ghanem. "From video, you can recognize not only objects but you can recognize what those objects are doing." For example, a train station security video can detect if a person is carrying a piece of luggage and suddenly leaves it unattended at a specific location." Detecting this type of information is important for security reasons. Another scenario involves video footage from grocery stores – used as business intelligence. The video algorithms can identify what shoppers interact with and buy after they've purchased a particular item. This can be valuable information for retailers for when they make decisions on where to stock items on the shelves, or where to place advertisements.

This is known as pattern recognition. But in order to detect a reliable pattern, thousands – even hundreds of thousands – of hours of video need to be analyzed. This isn't something that a human can do. Computer scientists need to develop algorithms and use powerful computing clusters to analyze this big data. "It's not scalable if humans are looking at it. So you need a machine to look at the data efficiently, browse through it and find the things that you're looking for. This is the type of research that I do," Ghanem explained.
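To make the unattended-luggage scenario concrete, here is a minimal Python sketch of the kind of rule a machine could apply to every frame of video. It is an illustration only, not Ghanem's method: it assumes an upstream detector has already located the bag and the people in each frame, and the function name `find_unattended`, the distance threshold and the frame format are all hypothetical.

```python
from math import dist


def find_unattended(frames, max_owner_distance=2.0, min_frames=3):
    """Return the index of the first frame at which the bag has been farther
    than max_owner_distance from every person for min_frames consecutive
    frames, or None if that never happens."""
    alone_streak = 0
    for index, frame in enumerate(frames):
        bag = frame["bag"]
        # The bag counts as attended if at least one person is close to it.
        attended = any(
            dist(bag, person) <= max_owner_distance
            for person in frame["people"]
        )
        alone_streak = 0 if attended else alone_streak + 1
        if alone_streak >= min_frames:
            return index
    return None


# Hypothetical detections: (x, y) floor positions in meters, one dict per frame.
frames = [
    {"bag": (5.0, 5.0), "people": [(5.5, 5.0)]},   # owner next to the bag
    {"bag": (5.0, 5.0), "people": [(6.0, 5.0)]},   # owner still close
    {"bag": (5.0, 5.0), "people": [(9.0, 5.0)]},   # owner walking away
    {"bag": (5.0, 5.0), "people": [(12.0, 5.0)]},  # owner far away
    {"bag": (5.0, 5.0), "people": []},             # nobody in view
]

alert_frame = find_unattended(frames)
print(alert_frame)
```

Because the rule is only a few comparisons per frame, a cluster can run it over far more footage than any person could watch.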

How scientists are improving the technology

Much as parents teach their babies to identify things like a dog or a cat, computer scientists teach machines to recognize objects through examples. Both humans and machines need to see a wide enough variety of dogs and cats to know, even if they've never seen a German Shepherd before, that it's a dog and not a cat. "So machine learning, a good portion of it, is about how to use data that you're given to learn to make decisions on new data that you're going to receive," said Ghanem.
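The idea of learning from examples and then deciding on new data can be sketched in a few lines of Python. This is a deliberately simple illustration, not the approach used in Ghanem's research: it assumes each animal has already been reduced to two made-up measurements (weight and shoulder height), and it uses a nearest-centroid rule, which averages the examples for each label and assigns a new animal to the closest average. The names `train_centroids` and `classify` and all of the numbers are hypothetical.

```python
from math import dist
from statistics import fmean


def train_centroids(examples):
    """Average the feature vectors of each label into one centroid per label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(fmean(column) for column in zip(*vectors))
        for label, vectors in by_label.items()
    }


def classify(centroids, features):
    """Return the label whose centroid is closest to the new feature vector."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical (weight in kg, shoulder height in cm) training examples.
training_examples = [
    ((30.0, 60.0), "dog"),
    ((8.0, 30.0), "dog"),
    ((25.0, 55.0), "dog"),
    ((4.0, 24.0), "cat"),
    ((5.0, 25.0), "cat"),
    ((3.5, 23.0), "cat"),
]

centroids = train_centroids(training_examples)

# A large animal unlike any single training example, standing in for
# the German Shepherd the system has never seen before.
prediction = classify(centroids, (35.0, 63.0))
print(prediction)
```

Real vision systems learn from images rather than two hand-picked numbers, but the principle is the same: the more varied the examples, the better the decisions on data the system has never seen.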

-By Meres J. Weche, KAUST News